While there are several playgrounds to try Foundation Models, sometimes I prefer running everything locally during development and for early trial and error experimentations. This post explains how to set up the Anaconda environment via Docker and how to run the small Flan-T5 model locally.

FLAN-T5

FLAN-T5 is a Large Language Model open sourced by Google under the Apache license at the end of 2022. It is available in different sizes - see the model card.

- google/flan-t5-small: 80M parameters; 300 MB download

- google/flan-t5-base: 250M parameters

- google/flan-t5-large: 780M parameters; 1 GB download

- google/flan-t5-xl: 3B parameters; 12 GB download

- google/flan-t5-xxl: 11B parameters

FLAN-T5 models use the following models and techniques:

- The pretrained model T5 (Text-to-Text Transfer Transformer)

- The FLAN (Finetuning Language Models) collection to do fine-tuning multiple tasks

FLAN-T5 is especially good in reasoning. Let’s look at an example:

1

2

3

4

5

6

7

8

Input:

Answer the following yes/no question by reasoning step-by-step.

Can you write a whole Haiku in a single tweet?

Output:

A haiku is a japanese three-line poem.

That is short enough to fit in 280 characters.

So the answer is yes.

Setup

Run Anaconda in a container:

1

2

mkdir workspace && cd workspace

docker run -i -t -p 8888:8888 -v "$PWD":/home --name anaconda3 continuumio/anaconda3

From within the container install the dependencies and run Jupyter:

1

2

3

4

5

pip install transformers

pip install transformers[torch]

pip3 install torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/cpu

conda install pytorch torchvision torchaudio cpuonly -c pytorch

jupyter lab --ip='0.0.0.0' --port=8888 --no-browser --allow-root --notebook-dir=/home

Open Jupyter in your browser via the link which you’ll find in the terminal.

After you stop Jupyter and the container, you can restart everything:

1

2

3

docker start anaconda3

docker exec -it anaconda3 /bin/bash

jupyter lab --ip='0.0.0.0' --port=8888 --no-browser --allow-root --notebook-dir=/home

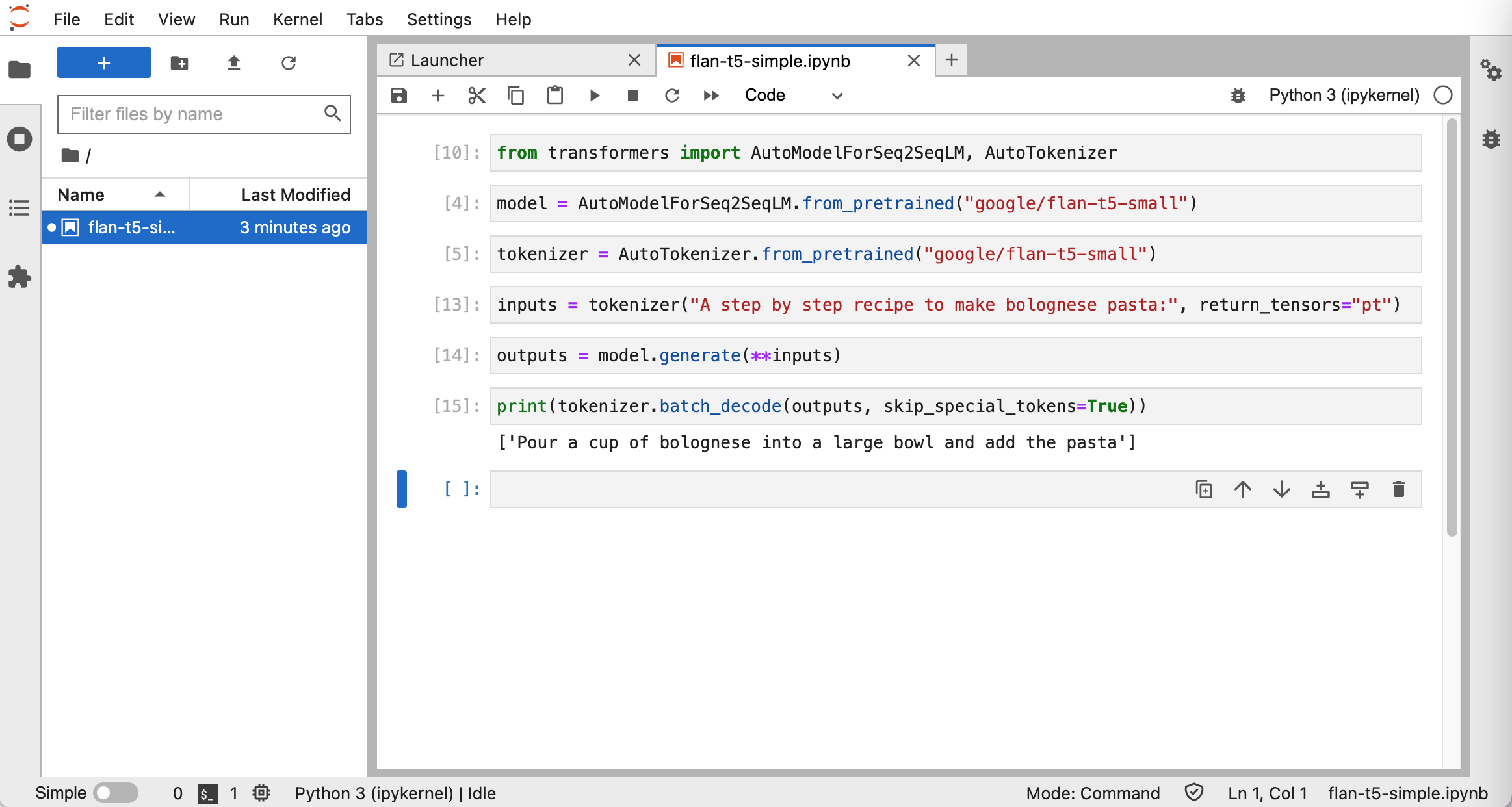

Sample

The following snippet is a simple sample which uses the pretrained model:

1

2

3

4

5

6

7

8

from transformers import AutoModelForSeq2SeqLM, AutoTokenizer

model = AutoModelForSeq2SeqLM.from_pretrained("google/flan-t5-small")

tokenizer = AutoTokenizer.from_pretrained("google/flan-t5-small")

inputs = tokenizer("A step by step recipe to make bolognese pasta:", return_tensors="pt")

outputs = model.generate(**inputs)

print(tokenizer.batch_decode(outputs, skip_special_tokens=True))

The snippet prints “Pour a cup of bolognese into a large bowl and add the pasta”.