Data scientists can use Watson Machine Learning to create models that are managed and deployed on the IBM Cloud. Developers can then access these models from their applications, for example to run predictions.

I blogged about a sample scenario that predicts whether people would have survived the Titanic accident based on their age, ticket class, sex and number of siblings and spouses on board.

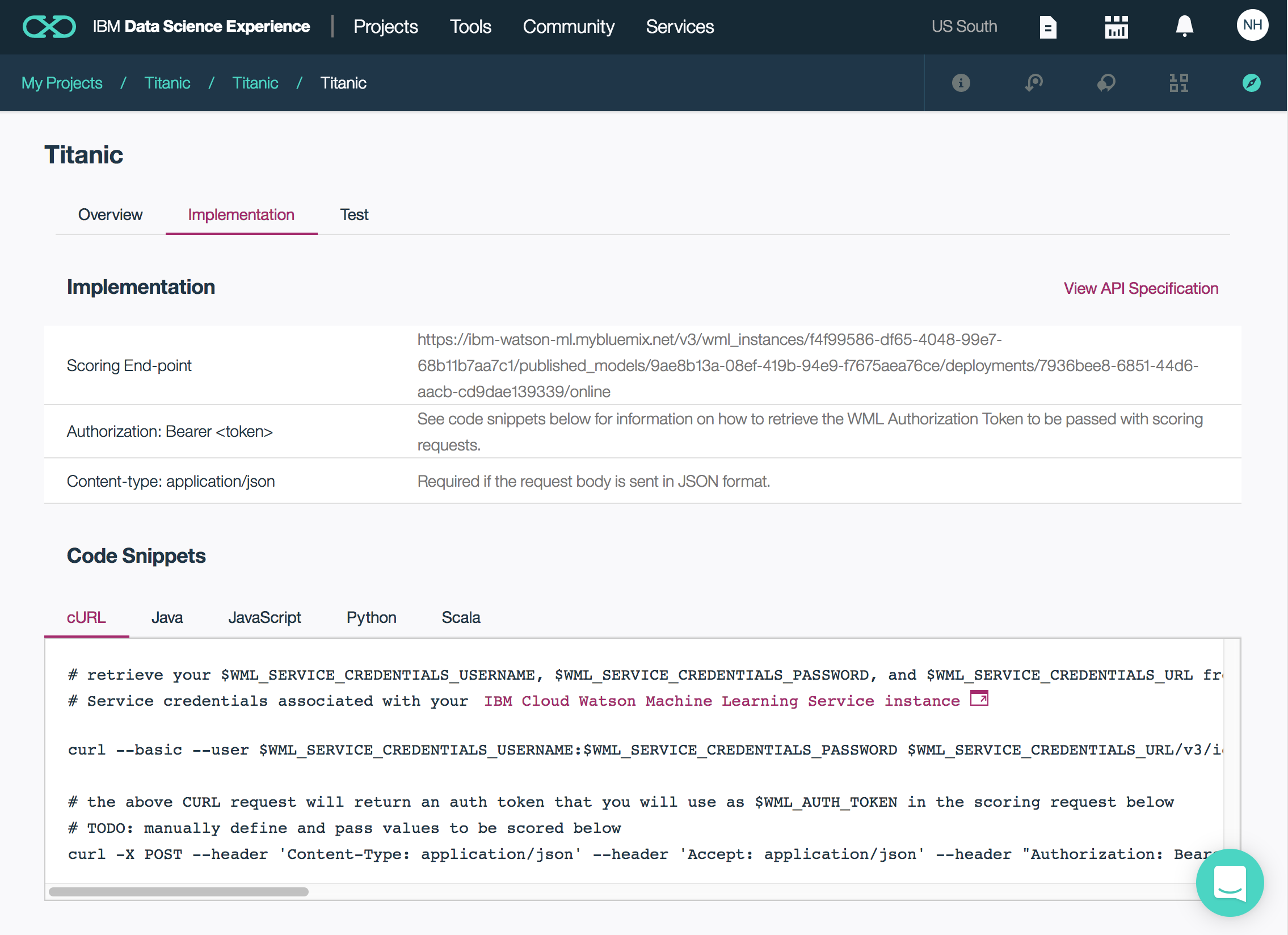

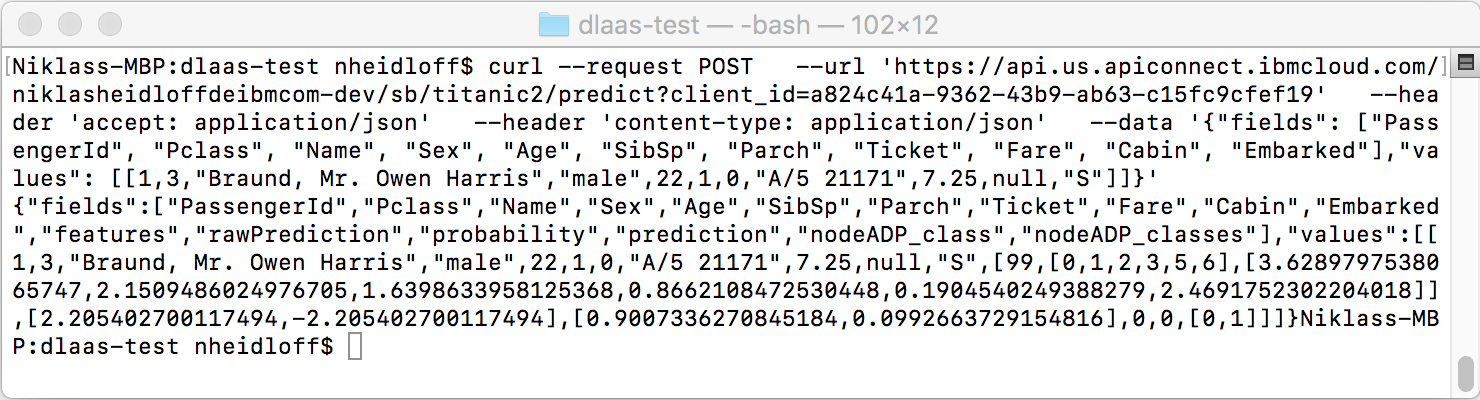

The screenshot shows the endpoint developers can use to access the deployed model via HTTP. The endpoint is protected, so callers need the credentials of the machine learning service. With these credentials they first request a bearer token, which is then required to invoke the actual prediction endpoint.
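To make the two-step flow concrete, here is a minimal Python sketch. It is an illustration under assumptions, not the service's documented client: the URLs are placeholders, the payload field names are made up, and I assume the token response is a JSON object with a `token` field and that the token request uses HTTP basic authentication.

```python
import base64
import json
import urllib.request

# Placeholder URLs (assumptions); use the ones shown for your own deployment.
TOKEN_URL = "https://ml.example.invalid/identity/token"
SCORING_URL = "https://ml.example.invalid/scoring"


def build_token_request(username, password, token_url=TOKEN_URL):
    """Build the request that exchanges service credentials for a bearer token."""
    basic = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        token_url,
        headers={"Authorization": f"Basic {basic}"},
        method="GET",
    )


def build_scoring_request(token, payload, scoring_url=SCORING_URL):
    """Build the prediction request that carries the bearer token."""
    return urllib.request.Request(
        scoring_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_json(request):
    """Send a request and decode the JSON response body."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


def predict(username, password, payload, send=send_json):
    """Step 1: read the token. Step 2: invoke the prediction endpoint with it."""
    token = send(build_token_request(username, password))["token"]  # assumed field name
    return send(build_scoring_request(token, payload))
```

Every application that calls `predict` this way has to hold the service credentials itself, which is the weak spot of this setup.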

While this mechanism works for smaller teams and projects, I’d guess that at some point you’d want API management capabilities. With them, developers no longer need the credentials of the machine learning service, and you can better track the REST API invocations.

That’s why I have built a little demo that shows how to use API Connect on top of the Watson Machine Learning endpoint.

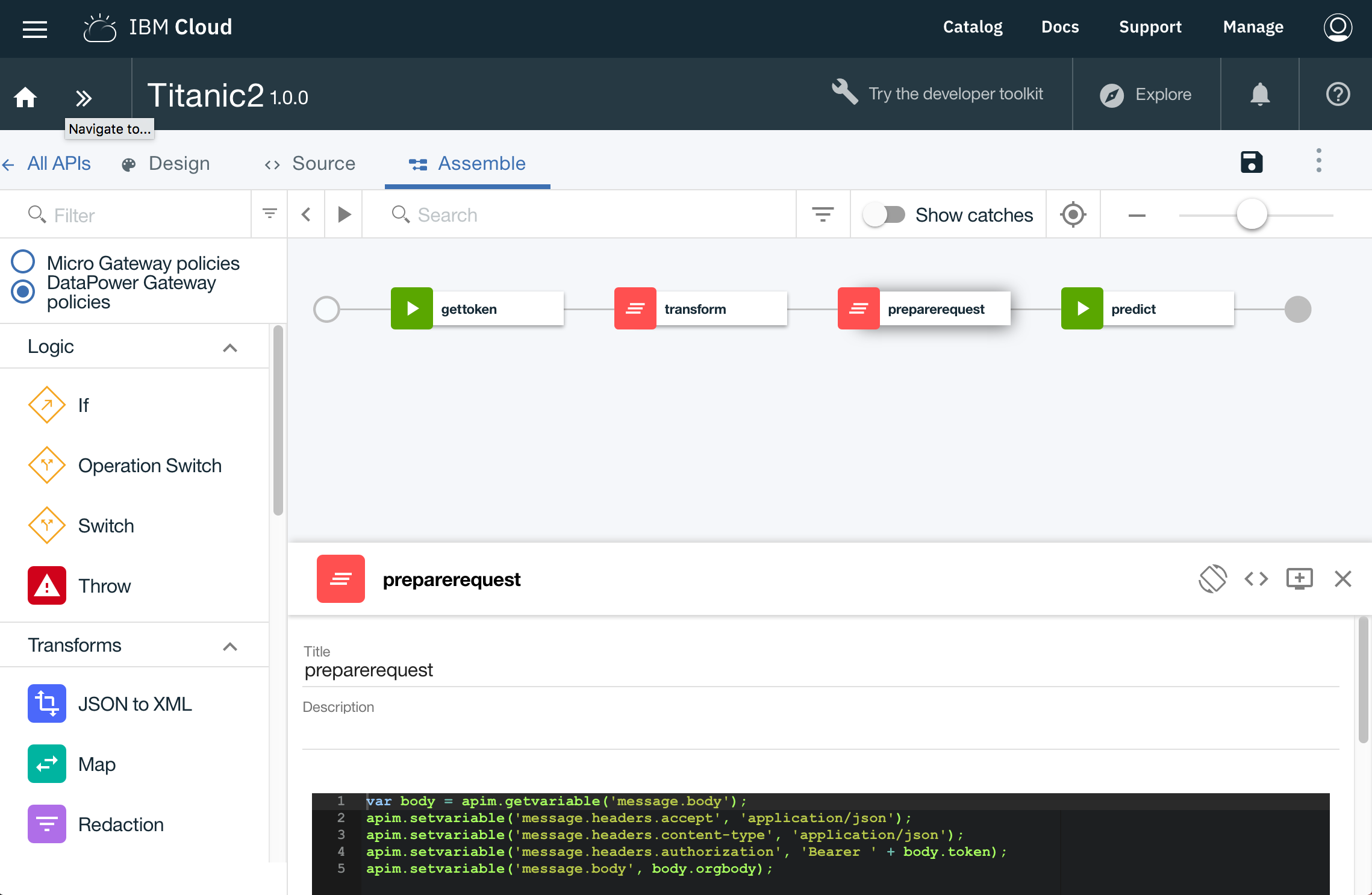

First I created a new API with a single POST operation. The assembly of this API has four steps. In the first step the token is read from Watson Machine Learning, which requires the machine learning credentials. In the second step the received token and the original content of the request body are put in a message JSON object. This message object is accessed in the third step, where the headers for the second invocation are set. In the last step the actual prediction endpoint is invoked.
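The data flow through these four steps can be modeled in a few lines of plain Python. This is not API Connect assembly syntax, just a sketch of what each step passes on to the next. The function names are hypothetical, and the two callables stand in for the two HTTP invocations.

```python
import json


def run_assembly(request_body, fetch_token, invoke_prediction):
    """Model the four assembly steps as plain function calls.

    fetch_token and invoke_prediction are hypothetical stand-ins for the
    two HTTP invocations; they are passed in so the flow stays testable.
    """
    # Step 1: read the token from the machine learning service.
    token = fetch_token()

    # Step 2: put the token and the original body into one message object.
    message = {"token": token, "body": request_body}

    # Step 3: set the headers for the second invocation from the message.
    headers = {
        "Authorization": f"Bearer {message['token']}",
        "Content-Type": "application/json",
    }

    # Step 4: invoke the actual prediction endpoint.
    return invoke_prediction(headers, json.dumps(message["body"]))
```

The message object in step 2 exists only because the original body has to be carried across the token call to the later steps.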

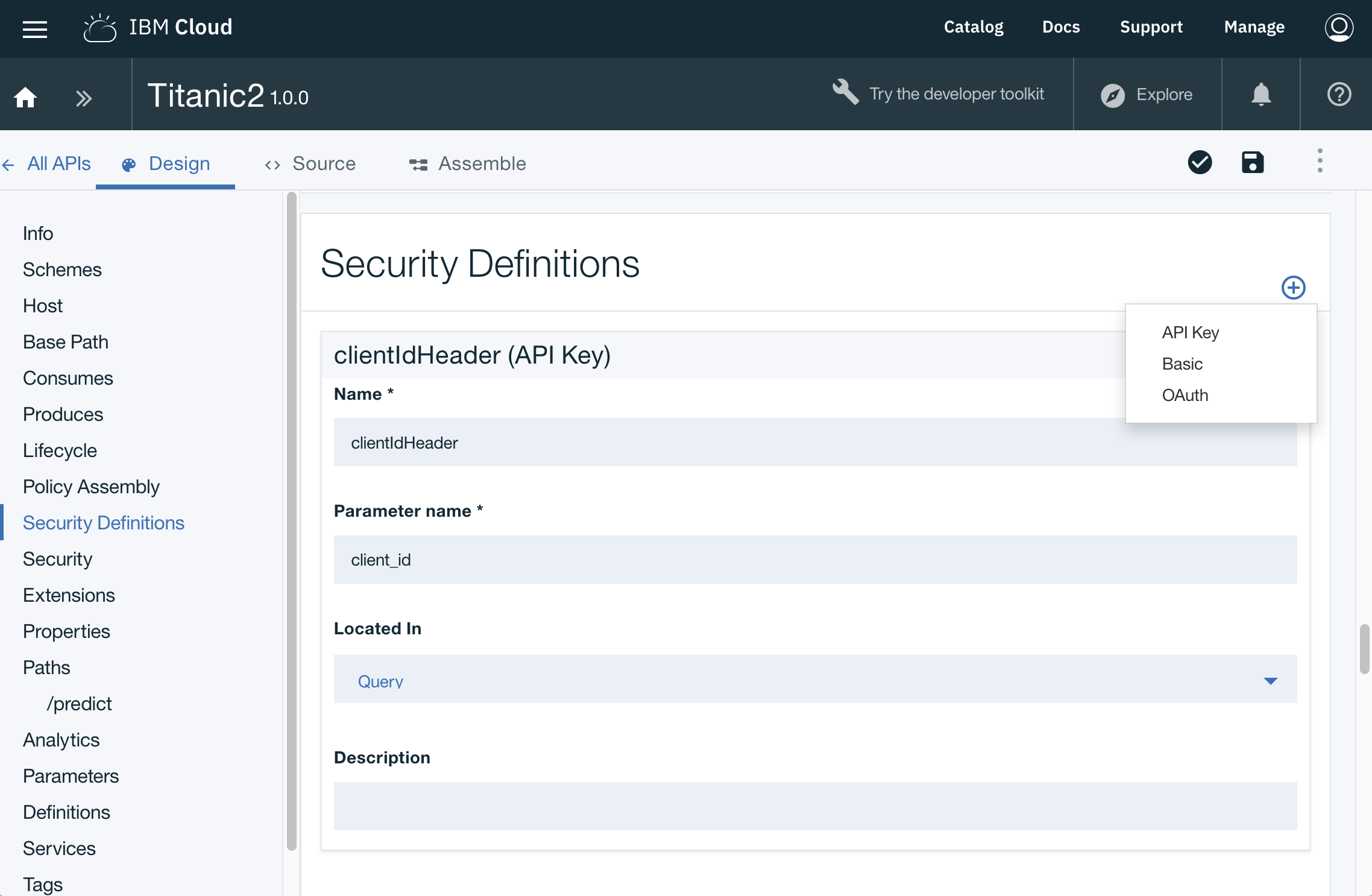

For the demo I secured the API with a client id only, which developers can request via a portal. You could additionally use client secrets, OAuth and other security mechanisms.

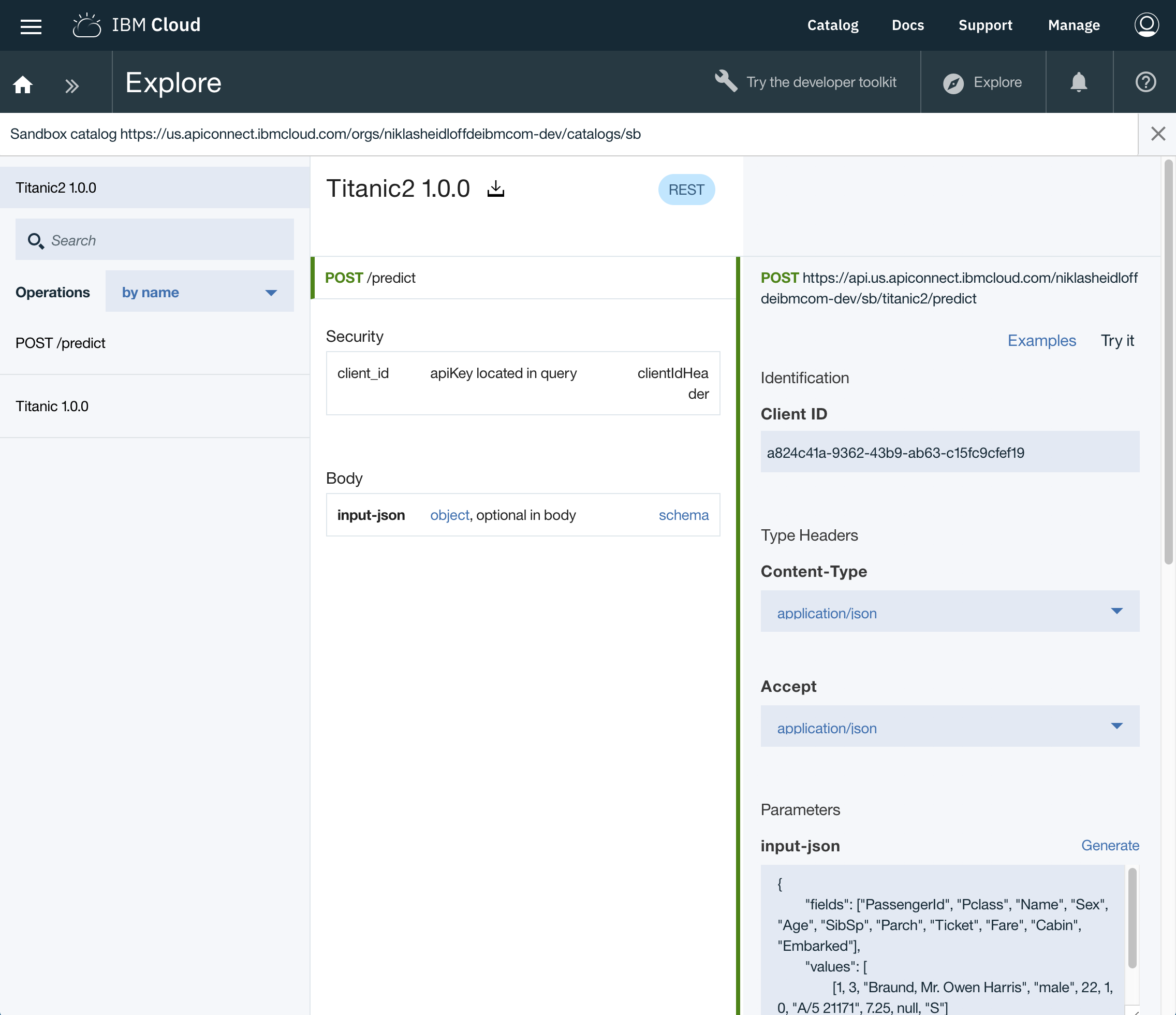

From the portal, developers can invoke the APIs.

Since not everything fits on the screenshot above, here is another screenshot of a curl command.
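If you prefer code to screenshots, a call against the managed API could look roughly like the following Python sketch. The URL and the payload are placeholders. I assume the client id is sent in the `X-IBM-Client-Id` header, which is the usual API Connect convention, so check the portal for the header your API expects.

```python
import json
import urllib.request

# Hypothetical URL of the API published through API Connect.
API_URL = "https://api.example.invalid/titanic/predict"


def build_managed_request(client_id, payload, api_url=API_URL):
    """Build a prediction request that needs only the client id,
    not the machine learning service credentials."""
    return urllib.request.Request(
        api_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "X-IBM-Client-Id": client_id,  # assumed header name
            "Content-Type": "application/json",
            "Accept": "application/json",
        },
        method="POST",
    )


def call_managed_api(client_id, payload, api_url=API_URL):
    """Send the request and decode the JSON response."""
    request = build_managed_request(client_id, payload, api_url)
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

Compared to calling Watson Machine Learning directly, the token handling has disappeared from the client, since the assembly takes care of it.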

To find out more about Watson Machine Learning, check out the IBM Data Science Experience. More information about API Connect can be found in the documentation and the available tutorials.