Large Language Models can improve the user experience of virtual assistants like Watson Assistant by providing answers rather than lists of links. With Watson Assistant’s ‘Bring your own Search’ feature, these generative capabilities can easily be added via OpenAPI and custom HTML responses.

Scenario

Let’s revisit the sample scenario from the previous posts.

The goal is to answer the following question:

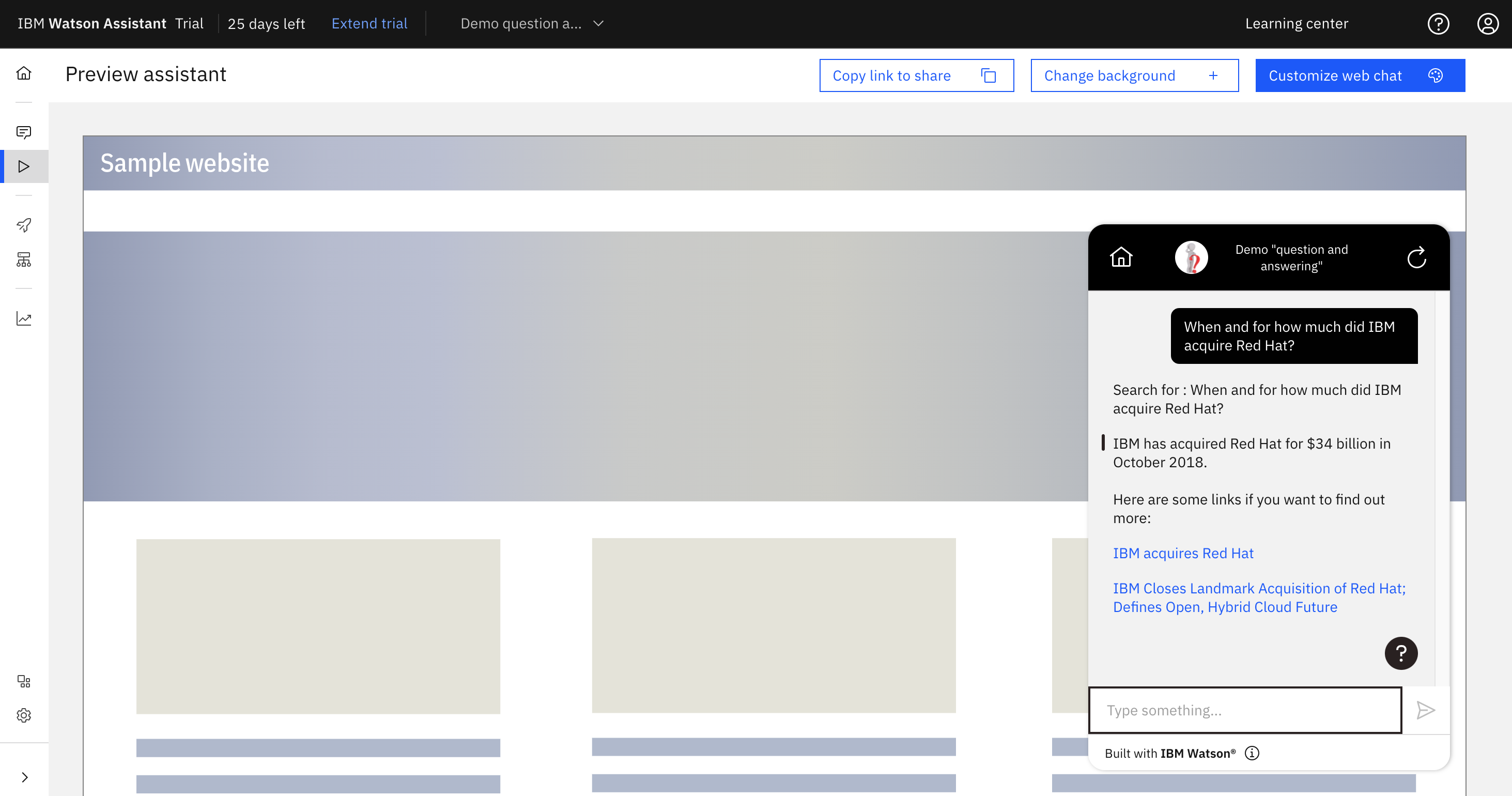

“When and for how much did IBM acquire Red Hat?”

Without context, the Large Language Model returns an answer that is not fully correct: it mentions ‘$39 billion’ and the year 2015.

“$39 billion in cash and stock in 2015, with IBM acquiring Red Hat for $34 billion in cash and stock in 2015”

When passing in context from a previous full text search, the answer is correct.

“IBM acquired Red Hat in October 2018 for $34 billion”
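
To make the difference between the two cases concrete, here is a minimal sketch of how passages from a full text search could be placed in front of the question before the prompt is sent to the model. The function name `build_prompt`, the prompt wording and the `max_chars` limit are assumptions for illustration, not the exact prompt used in the sample.

```python
def build_prompt(question: str, passages: list[str], max_chars: int = 2000) -> str:
    """Combine search passages and the question into one prompt string.

    Hypothetical helper: the wording and the character budget are
    illustrative assumptions, not taken from the sample repository.
    """
    context_parts = []
    used = 0
    for passage in passages:
        text = " ".join(passage.split())  # collapse line breaks and extra spaces
        if used + len(text) > max_chars:
            break  # stop before the context exceeds the budget
        context_parts.append(text)
        used += len(text)
    context = "\n\n".join(context_parts)
    return (
        "Answer the question based on the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


# Illustrative passage standing in for a real search result.
example_passages = [
    "IBM announced in October 2018 that it would acquire Red Hat for $34 billion."
]
print(build_prompt("When and for how much did IBM acquire Red Hat?", example_passages))
```

The character budget is only a rough stand-in for the model's input limit; a real implementation would more likely count tokens.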

Architecture

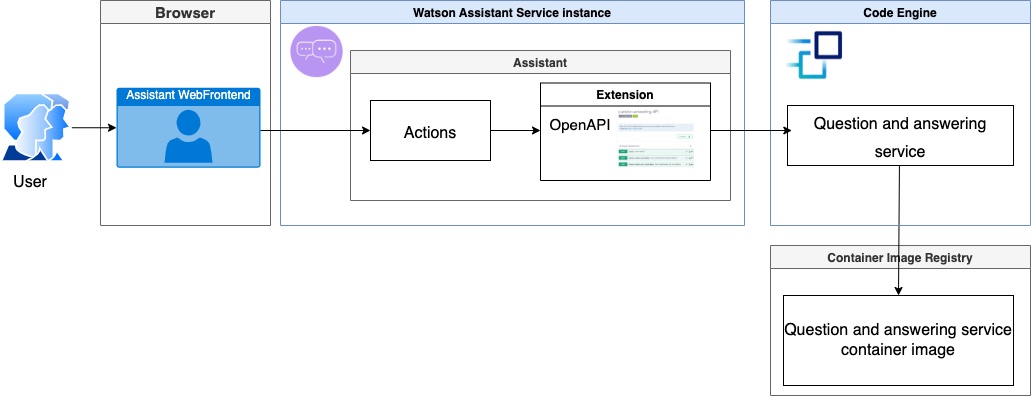

The following diagram explains the architecture.

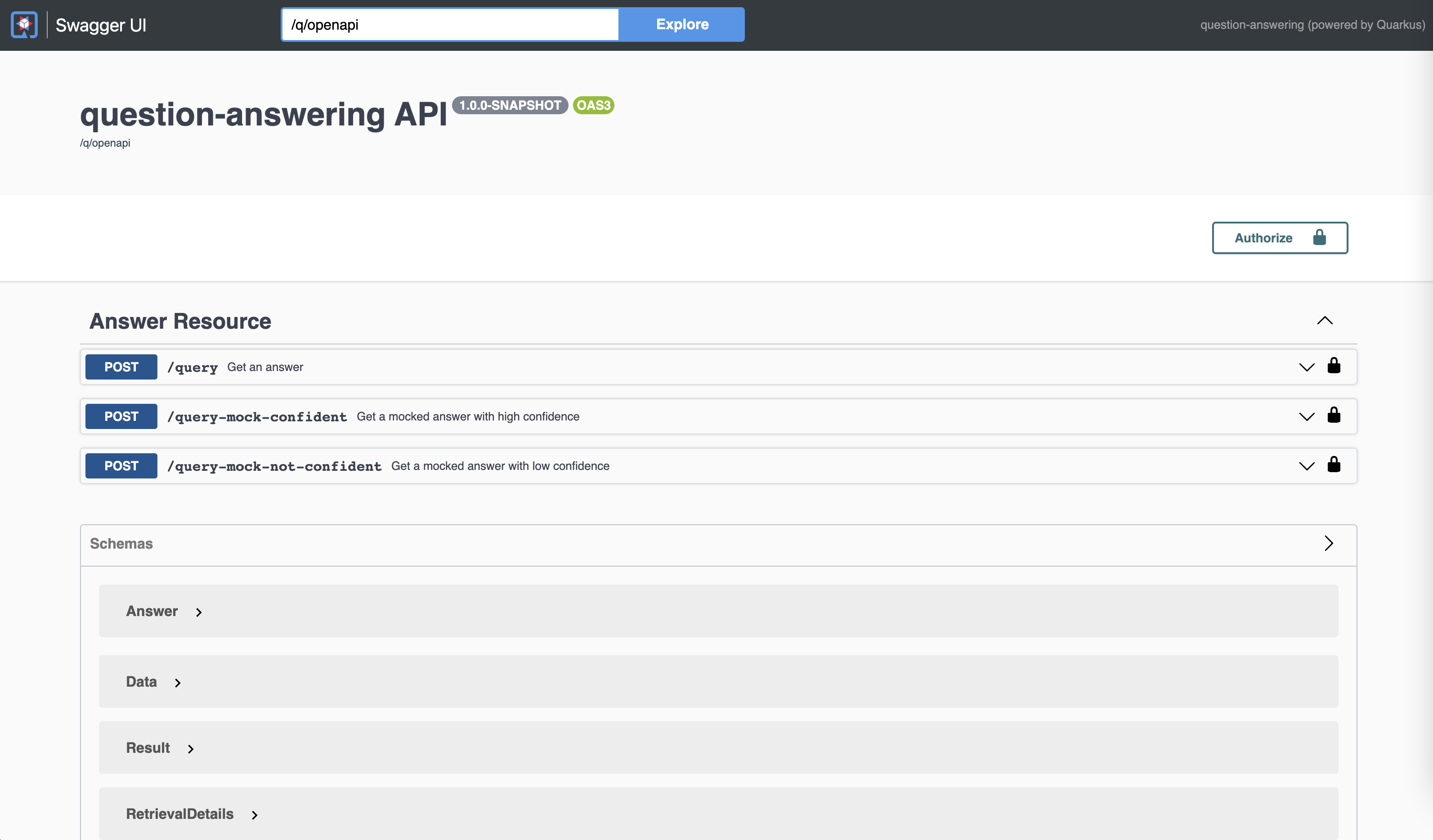

The ‘Question Answering’ service provides a REST API documented via OpenAPI (formerly known as Swagger). The /query endpoints implement the same interface as Watson Discovery.
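
As a rough sketch of what a client for such an endpoint could look like, the following snippet builds a POST request for /query and reads a Discovery-style response. Several details are assumptions: the base URL, the basic-auth user name `apikey`, the `text:` prefix in the query, and the `title`, `text` and `url` fields in each result. Check the OpenAPI definition of the deployed service before relying on any of them.

```python
import base64
import json
import urllib.request


def build_query_request(base_url: str, api_key: str, question: str) -> urllib.request.Request:
    """Build a POST request for the /query endpoint (payload shape is assumed)."""
    body = json.dumps({"query": f"text:{question}"}).encode("utf-8")
    token = base64.b64encode(f"apikey:{api_key}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/query",
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )


def extract_results(response: dict) -> list[dict]:
    """Pull title, text and url out of a Discovery-style 'results' array.

    The field names are assumptions; Discovery results carry whatever
    fields the indexed documents contain.
    """
    results = []
    for item in response.get("results", []):
        text = item.get("text", "")
        if isinstance(text, list):  # some fields are returned as lists of strings
            text = " ".join(text)
        results.append(
            {"title": item.get("title", ""), "text": text, "url": item.get("url", "")}
        )
    return results


def ask(base_url: str, api_key: str, question: str, timeout: float = 30.0) -> list[dict]:
    """Send the question to the service and return the simplified results."""
    request = build_query_request(base_url, api_key, question)
    with urllib.request.urlopen(request, timeout=timeout) as reply:
        return extract_results(json.load(reply))
```

With a locally running service this could be called as `ask("http://localhost:8080", "<api key>", "When and for how much did IBM acquire Red Hat?")`, where the host and port are again placeholders.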

Bring Your Own Search

IBM Watson Assistant comes with an extensibility mechanism called ‘Bring your own Search’. It allows integrating any system that provides REST APIs documented via OpenAPI.
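
To give an idea of what such an OpenAPI description contains, here is a heavily reduced sketch of a document for a single /query operation, written as a Python dictionary and printed as JSON. The server URL, the request and response schemas and the security scheme are illustrative assumptions; the real definition ships with the sample.

```python
import json

# Minimal OpenAPI 3.0 description of one POST /query operation.
# All names and schemas below are hypothetical placeholders.
openapi_document = {
    "openapi": "3.0.3",
    "info": {"title": "Question Answering (sketch)", "version": "0.0.1"},
    "servers": [{"url": "https://question-answering.example.com"}],
    "paths": {
        "/query": {
            "post": {
                "operationId": "query",
                "summary": "Return an answer and the documents used as context",
                "security": [{"basicAuth": []}],
                "requestBody": {
                    "required": True,
                    "content": {
                        "application/json": {
                            "schema": {
                                "type": "object",
                                "required": ["query"],
                                "properties": {"query": {"type": "string"}},
                            }
                        }
                    },
                },
                "responses": {
                    "200": {
                        "description": "Discovery-style result list",
                        "content": {
                            "application/json": {
                                "schema": {
                                    "type": "object",
                                    "properties": {
                                        "matching_results": {"type": "integer"},
                                        "results": {
                                            "type": "array",
                                            "items": {"type": "object"},
                                        },
                                    },
                                }
                            }
                        },
                    }
                },
            }
        }
    },
    "components": {
        "securitySchemes": {"basicAuth": {"type": "http", "scheme": "basic"}}
    },
}

print(json.dumps(openapi_document, indent=2))
```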

The screenshot at the top shows how the single answer is displayed, together with links to the documents that were passed in as context.
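
A response like this can be assembled as a small HTML fragment. The following sketch shows one way to do it, assuming each document is a dictionary with hypothetical `title` and `url` keys; the markup used by the actual starter kit may differ.

```python
from html import escape


def render_answer_html(answer: str, documents: list[dict]) -> str:
    """Render the answer followed by a list of links to the context documents.

    Hypothetical helper: the 'title' and 'url' keys are assumed field names.
    All values are escaped so that document text cannot inject markup.
    """
    items = "".join(
        f'<li><a href="{escape(doc["url"], quote=True)}" target="_blank">'
        f'{escape(doc["title"])}</a></li>'
        for doc in documents
    )
    return f"<p>{escape(answer)}</p><ul>{items}</ul>"


# Placeholder document; the URL is not a real source.
print(
    render_answer_html(
        "IBM acquired Red Hat in October 2018 for $34 billion",
        [{"title": "Example document", "url": "https://example.com/doc-1"}],
    )
)
```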

I’ve open sourced a sample that leverages PrimeQA and the FLAN-T5 model (via Model as a Service) to provide an answer.

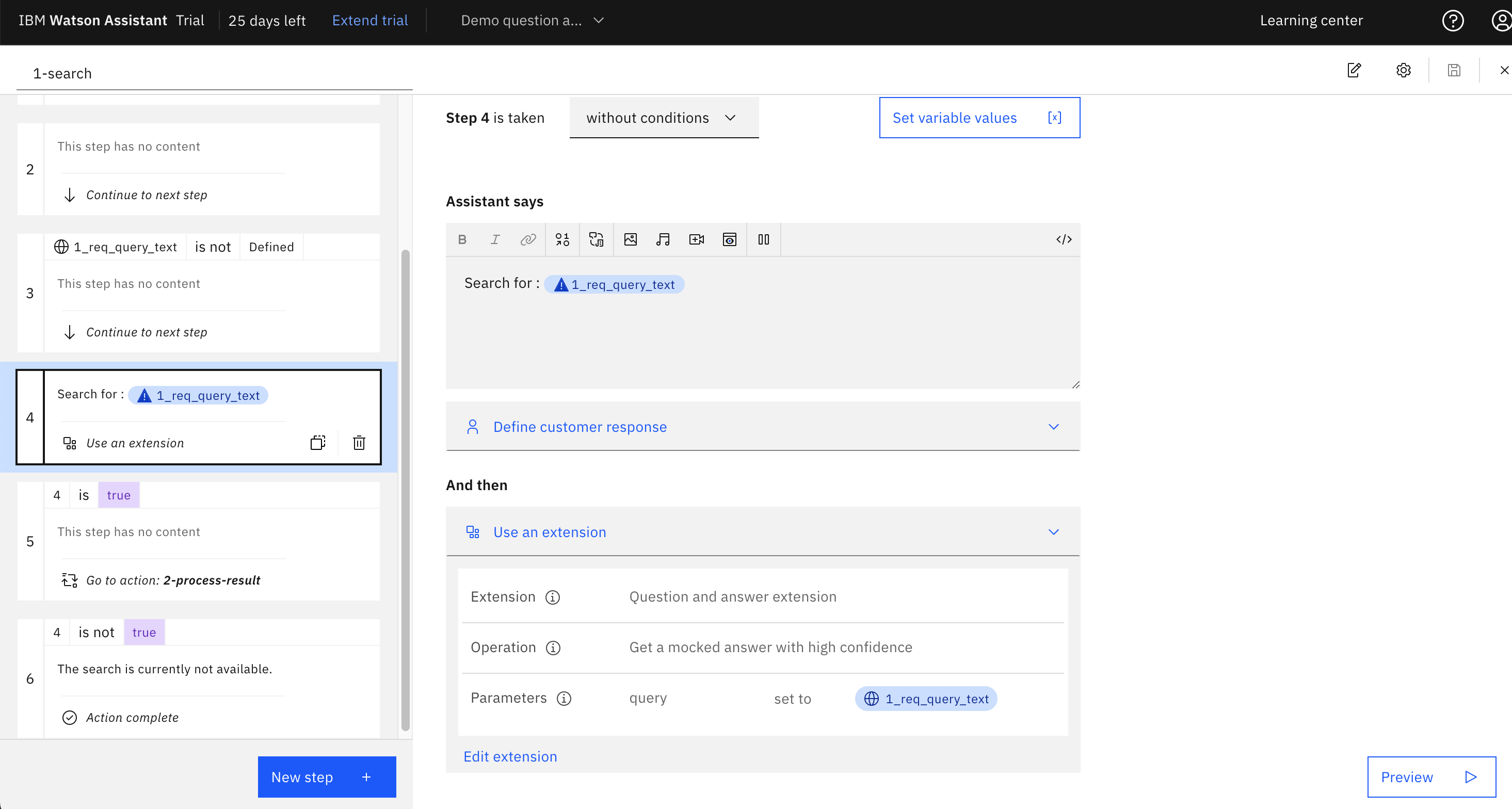

My colleague Thomas Südbröcker has integrated this extension in Watson Assistant. Read his documentation. He provides a starter kit which consists of a set of actions that can be imported into Watson Assistant. The first action invokes the endpoint of the sample ‘Question Answering’ service.

Resources

- Bring your own search to IBM Watson Assistant

- The Setup of Bring Your Own Search (BYOS) for a Question Answering Service in Watson Assistant

- Sample code: question-answering

- Bring your own Search example

- Generative AI for Question Answering Scenarios

- Generative AI Sample for Question Answering

- Using PrimeQA For NLP Question Answering

- Finding concise answers to questions in enterprise documents