Last week at StrataHadoop Rod Smith and David Fallside demonstrated project Nitro from IBM Emerging Technologies allowing business users to analyze big amounts and different types of data including real-time data. In the optimal case business users can do this without any help but sometimes collaboration with data scientists is necessary who provide the necessary code that can then be consumed in Nitro.

As I started to learn about big data and analytics only recently I had and have to learn about these topics, for example about other roles, other technologies and other programming languages. In the big data world there is the role of a Data Scientist. Here is how Wikipedia defines this role:

“Data scientists use the ability to find and interpret rich data sources; manage large amounts of data despite hardware, software, and bandwidth constraints; merge data sources; ensure consistency of datasets; create visualizations to aid in understanding data; build mathematical models using the data.”

As far as programming languages data scientists use a variety of languages which includes also for application developers familiar languages like Java and Scala, but as far as I can see the two mostly used languages are Python and R which have pros and cons.

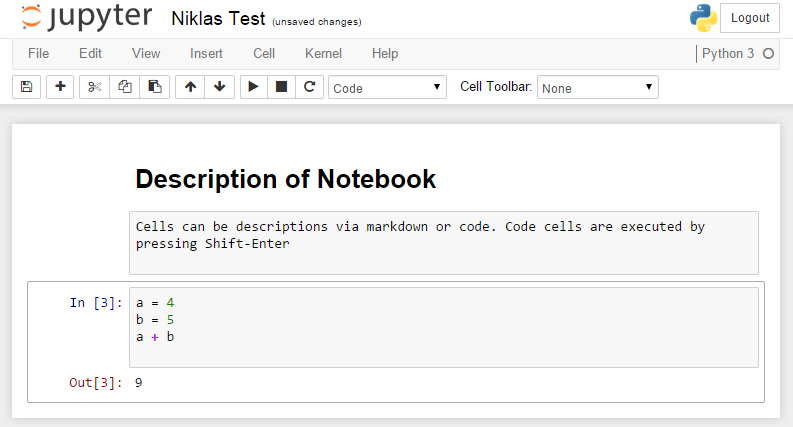

Python code can be written, documented and run easily in IPython Notebooks which are essentially web based IDEs for data scientists and very popular these days. Many universities are using them and the number of notebooks on GitHub is growing very fast. As example check out how to use Python to see how the Times writes about men and women and how to find out how clean restaurants are in San Francisco.

To learn more about IPython Notebooks check out these tutorials, the A Programmer’s Guide to Data Mining or the videos on the IPython website.

There are several ways to run IPyton Notebooks on IBM Bluemix. My colleague Jean Francois Puget blogged recently about how to deploy notebooks to Bluemix via Docker within minutes. I’ve followed his instructions and it was really easy. So if you want to learn more about this technology just set it up on Bluemix and give it a try.