I’ve open sourced a sample that shows how to steer Anki Overdrive cars via Kinect and IBM Bluemix. The sample requires the Node.js controller and MQTT interface that I open sourced previously. Alongside Watson speech recognition, it is another way to send commands to the cars.

Check out the project on GitHub.

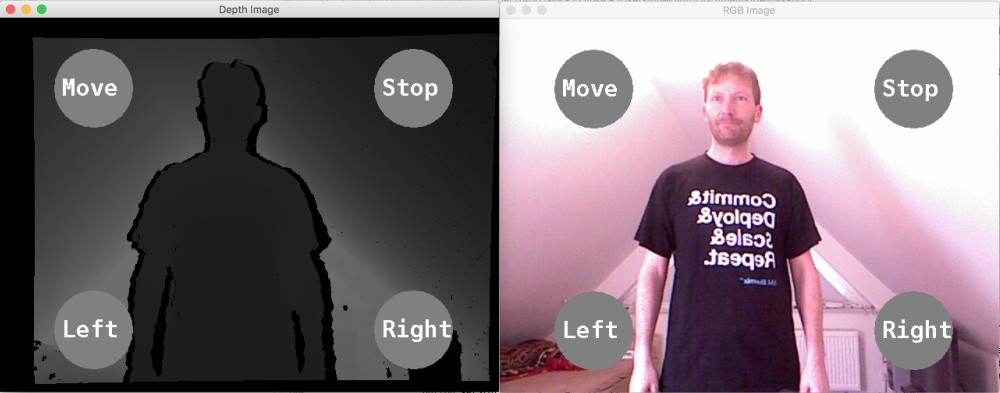

The project contains sample code that shows how to send MQTT commands to IBM Bluemix when ‘buttons’ are pressed via Kinect. A button is pressed when, for example, you move a hand over it and keep it there for two seconds. The project is configured so that this only works when the hands are between 50 cm and 1 m away from the Kinect. The distance is measured via the Kinect depth sensor.
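To make the distance check more concrete, here is a minimal Python sketch of the idea. It is not the code from the project. It assumes the depth sensor reports the hand distance in millimeters and that both bounds are inclusive, and the names `hand_in_range` and `build_command`, the topic string, and the payload layout are hypothetical and only there for illustration.

```python
import json
from typing import Tuple

# Range from the article: hands must be between 50 cm and 1 m from the Kinect.
# Assumption: the depth value arrives in millimeters.
MIN_DEPTH_MM = 500
MAX_DEPTH_MM = 1000


def hand_in_range(depth_mm: int) -> bool:
    """Return True if the hand is inside the configured distance window."""
    return MIN_DEPTH_MM <= depth_mm <= MAX_DEPTH_MM


def build_command(action: str, car: str) -> Tuple[str, str]:
    """Build a (topic, payload) pair for an MQTT publish.

    The topic and the JSON layout are hypothetical placeholders;
    the real project may use different names.
    """
    topic = "iot-2/evt/" + action + "/fmt/json"
    payload = json.dumps({"d": {"action": action, "car": car}})
    return topic, payload


if __name__ == "__main__":
    depth_mm = 750  # example reading from the depth sensor
    if hand_in_range(depth_mm):
        topic, payload = build_command("accelerate", "car1")
        print(topic, payload)
```

The resulting topic and payload could then be handed to any MQTT client library to publish to Bluemix.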

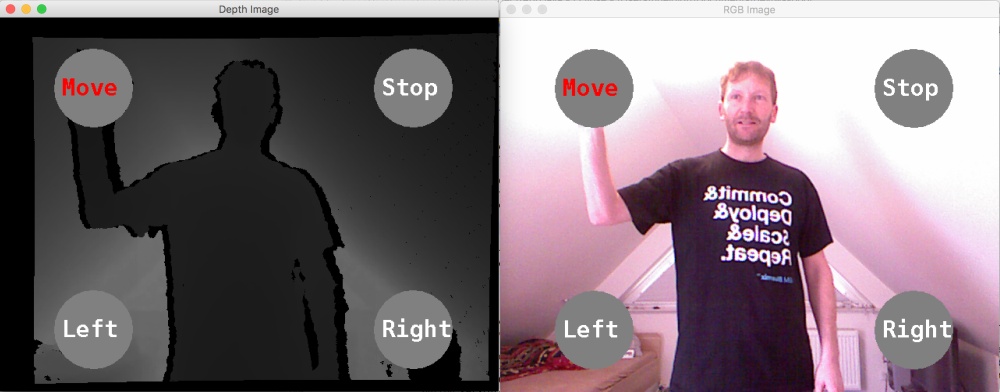

The left picture shows the depth information; the right picture is the RGB image.

When a hand is moved above a button, the state of the button changes to ‘pressed’. If the hand is still above the button two seconds later, the appropriate action is invoked.
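The press-and-hold behavior can be sketched as a small state machine. This is an illustrative Python version, not the project's implementation. The class name `DwellButton`, the `update` method, and the choice to fire only once per hover are assumptions; only the two-second delay comes from the description above.

```python
from dataclasses import dataclass
from typing import Optional

DWELL_SECONDS = 2.0  # hold time from the article


@dataclass
class DwellButton:
    name: str
    pressed_since: Optional[float] = None  # time the hand entered the button
    fired: bool = False                    # action already invoked for this hover

    def update(self, hand_over: bool, now: float) -> bool:
        """Feed one frame; return True when the action should be invoked."""
        if not hand_over:
            # Hand left the button: reset to the idle state.
            self.pressed_since = None
            self.fired = False
            return False
        if self.pressed_since is None:
            # Hand just arrived: the button becomes 'pressed'.
            self.pressed_since = now
            return False
        if not self.fired and now - self.pressed_since >= DWELL_SECONDS:
            # Hand stayed for the full dwell time: invoke the action once.
            self.fired = True
            return True
        return False


if __name__ == "__main__":
    button = DwellButton("accelerate")
    for t in (0.0, 1.0, 2.0, 3.0):
        print(t, button.update(hand_over=True, now=t))
```

Passing the timestamp in as a parameter, instead of reading the clock inside `update`, keeps the logic easy to test without a Kinect attached.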

Here is a picture of me driving the cars via Kinect.

Here is the series of blog articles about the Anki Overdrive with Bluemix demos.