To train the object detection model for my sample TensorFlow Object Detection for Anki Overdrive Cars, I used a custom Docker container. I’ve extended the documentation in my repo to also describe how Fabric for Deep Learning (FfDL) can be used to run this container.

Fabric for Deep Learning (FfDL) is an open source extension for Kubernetes to run deep learning workloads. It can run in the cloud or on-premises. It supports frameworks like TensorFlow, Caffe and PyTorch, it supports GPUs, and it can be used to run distributed training. Watson Deep Learning as a Service uses FfDL internally.

FfDL supports various deep learning frameworks out of the box. For TensorFlow Object Detection, however, this is not sufficient, since additional libraries are required.

Fortunately, it turned out that you can also bring your own containers and use them for training on FfDL. Until recently this was only possible by compiling FfDL yourself before deploying it, but now this functionality is also available in the standard installation.

Here is a sample manifest.yml file. Both the training data and the output are stored in IBM Cloud Object Storage (in the buckets nh-od-input and nh-od-output).


```yaml
name: Niklas OD
description: Niklas OD
version: "1.0"
gpus: 0
cpus: 4
learners: 1
memory: 16Gb
data_stores:
  - id: test-datastore
    type: mount_cos
    training_data:
      container: nh-od-input
    training_results:
      container: nh-od-output
    connection:
      auth_url: http://s3-api.dal-us-geo.objectstorage.softlayer.net
      user_name: xxx
      password: xxx
framework:
  name: custom
  version: "nheidloff/train-od"
  command: cd /tensorflow/models/research/volume && python model_main.py --model_dir=$RESULT_DIR/training --pipeline_config_path=ssd_mobilenet_v2_coco.config --num_train_steps=15000 --alsologtostderr
```

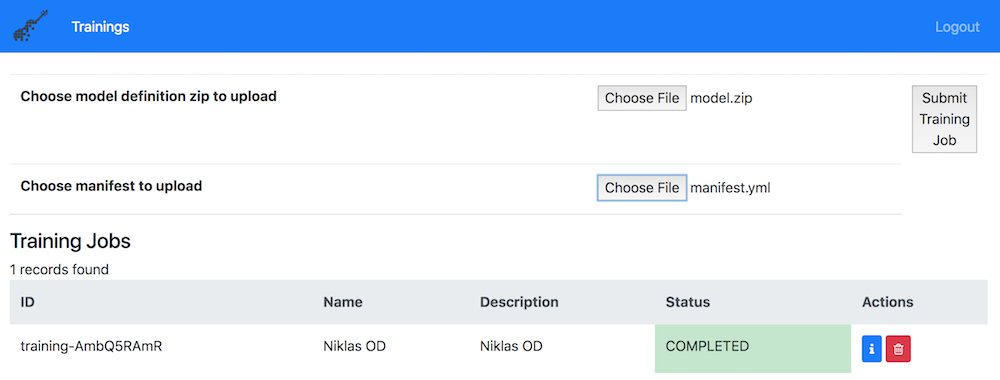

At the bottom of manifest.yml, the framework section defines the Docker image (name is set to custom and the image goes into version) as well as the command that starts the training. To initiate the training, you can use either the CLI or the web application shown in the screenshot.
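If you run several trainings with different images or hyperparameters, it may be easier to generate the manifest than to edit it by hand. The following is a minimal sketch using only the Python standard library. `build_manifest`, `yaml_str` and the environment variables `COS_USER_NAME`/`COS_PASSWORD` are hypothetical names, not part of FfDL; the layout simply mirrors the sample manifest, and the resulting file is still submitted through the CLI or web application as usual.

```python
import json
import os


def yaml_str(value: str) -> str:
    # json.dumps produces a double-quoted string with JSON escapes, which YAML
    # also accepts as a double-quoted scalar. This keeps values containing
    # ':' or '#' (e.g. passwords) from breaking the manifest.
    return json.dumps(value)


def build_manifest(name: str, image: str, command: str,
                   input_bucket: str, output_bucket: str,
                   auth_url: str, user_name: str, password: str,
                   cpus: int = 4, gpus: int = 0, learners: int = 1,
                   memory: str = "16Gb") -> str:
    """Render an FfDL manifest for a custom Docker image (hypothetical helper)."""
    lines = [
        f"name: {yaml_str(name)}",
        f"description: {yaml_str(name)}",
        'version: "1.0"',
        f"gpus: {gpus}",
        f"cpus: {cpus}",
        f"learners: {learners}",
        f"memory: {memory}",
        "data_stores:",
        "  - id: test-datastore",
        "    type: mount_cos",
        "    training_data:",
        f"      container: {yaml_str(input_bucket)}",
        "    training_results:",
        f"      container: {yaml_str(output_bucket)}",
        "    connection:",
        f"      auth_url: {yaml_str(auth_url)}",
        f"      user_name: {yaml_str(user_name)}",
        f"      password: {yaml_str(password)}",
        # For custom containers, 'version' holds the Docker image name.
        "framework:",
        "  name: custom",
        f"  version: {yaml_str(image)}",
        f"  command: {yaml_str(command)}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    command = ("cd /tensorflow/models/research/volume && python model_main.py "
               "--model_dir=$RESULT_DIR/training "
               "--pipeline_config_path=ssd_mobilenet_v2_coco.config "
               "--num_train_steps=15000 --alsologtostderr")
    print(build_manifest(
        name="Niklas OD",
        image="nheidloff/train-od",
        command=command,
        input_bucket="nh-od-input",
        output_bucket="nh-od-output",
        auth_url="http://s3-api.dal-us-geo.objectstorage.softlayer.net",
        # Hypothetical variable names: read credentials from the environment
        # so they never end up in version control.
        user_name=os.environ.get("COS_USER_NAME", "xxx"),
        password=os.environ.get("COS_PASSWORD", "xxx"),
    ))
```

Quoting every string value is a deliberate choice here: $RESULT_DIR stays in the command untouched, so it is still expanded when the training command runs, while special characters in credentials cannot change the structure of the YAML.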

Thanks a lot to my colleagues Tommy Chaoping and Animesh Singh for their help getting this sample to work.